Enterprise AI Integration & Safety

Move AI from demo to production. I build secure, reliable AI workflows that integrate seamlessly with your existing business tools.

We are moving from the age of chatbots to the age of Agentic AI. The 2026 technological landscape puts a premium not just on capability, but on the control, evaluation, and safety of autonomous systems at scale.

I bridge the gap between experimental generative models and reliable business software. By combining robust engineering scaffolding with advanced safety protocols, I help organizations deploy agents that are both powerful and predictable.

The 2026 Strategic Context: Agency & Safety

As “Agentic AI” becomes the standard interaction model, organizations face new risks, including Model Collapse (quality degradation when models are repeatedly trained on synthetic data) and Adversarial Adaptation (jailbreaks that evolve over multiple turns, such as “Crescendo” attacks). My approach applies the SecSRE (Security + SRE) framework to mitigate these risks while still enabling innovation.

Core Engineering Competencies

Agentic Workflows & Model Context Protocol (MCP)

Implementing standards like MCP to give AI agents secure, structured access to your business tools.

- Autonomous Agents: Systems that verify their own work (e.g., “Analyze this dataset, generate a report, and verify against this schema”).

- Evaluation at Scale: Deploying frameworks that go beyond “pass/fail” to track multiple signals, including user satisfaction, technical correctness, and drift in both over time.
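
As a minimal sketch of the "verify against this schema" step, the loop below validates an agent's output and feeds any violations back for another attempt. Everything named here (`REPORT_SCHEMA`, `schema_errors`, `generate_verified`, `fake_agent`) is hypothetical and exists only for illustration; a real deployment would likely call a model and use a full schema validator rather than this hand-rolled type check.

```python
from typing import Any, Callable

# Hypothetical schema: required field name -> expected Python type.
REPORT_SCHEMA: dict[str, type] = {"title": str, "row_count": int, "findings": list}


def schema_errors(report: dict[str, Any], schema: dict[str, type]) -> list[str]:
    """Return human-readable schema violations (empty list if the report is valid)."""
    errors = []
    for field, expected in schema.items():
        if field not in report:
            errors.append(f"missing field: {field}")
        elif not isinstance(report[field], expected):
            errors.append(f"{field}: expected {expected.__name__}")
    return errors


def generate_verified(
    generate: Callable[[list[str]], dict[str, Any]],
    schema: dict[str, type],
    max_attempts: int = 3,
) -> dict[str, Any]:
    """Call the agent, feeding validation errors back until the output is valid."""
    feedback: list[str] = []
    for _ in range(max_attempts):
        report = generate(feedback)
        feedback = schema_errors(report, schema)
        if not feedback:
            return report
    raise ValueError(f"no valid report after {max_attempts} attempts: {feedback}")


# Stand-in for a model call: the first draft omits a field, the retry fixes it.
def fake_agent(feedback: list[str]) -> dict[str, Any]:
    report: dict[str, Any] = {"title": "Q3 sales", "findings": ["revenue up"]}
    if feedback:
        report["row_count"] = 1200
    return report


result = generate_verified(fake_agent, REPORT_SCHEMA)
```

The cap on attempts is a deliberate choice: an agent that cannot produce valid output should fail loudly rather than loop indefinitely.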

Hybrid Intelligence & Safety Architectures

Designing systems where AI augments human decision-making rather than replacing it, preventing catastrophic failure states.

- Human-in-the-Loop (HITL): Seamless escalation protocols for low-confidence agent actions.

- Supply Chain Security: Preventing “Package Hallucinations” where coding agents inadvertently introduce non-existent or malicious dependencies.
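
The two safeguards above can be sketched as a single review gate that runs before an agent's action is applied. This is an illustrative sketch, not a production design: the threshold, the allowlist contents, and the names `ProposedAction`, `Decision`, and `review` are all assumptions, and it presumes the agent exposes some usable confidence score.

```python
from dataclasses import dataclass, field
from enum import Enum


class Decision(Enum):
    APPROVE = "approve"
    ESCALATE = "escalate"  # hand off to a human reviewer
    BLOCK = "block"


@dataclass
class ProposedAction:
    description: str
    confidence: float  # agent's confidence in [0, 1] (assumed to be available)
    new_dependencies: list[str] = field(default_factory=list)


# Hypothetical values: tune the threshold and load the allowlist from your own registry.
CONFIDENCE_THRESHOLD = 0.8
APPROVED_PACKAGES = {"requests", "numpy", "pandas"}


def review(action: ProposedAction) -> tuple[Decision, str]:
    """Block unapproved dependencies first, then escalate low-confidence actions."""
    unknown = sorted(set(action.new_dependencies) - APPROVED_PACKAGES)
    if unknown:
        return Decision.BLOCK, f"unapproved dependencies: {', '.join(unknown)}"
    if action.confidence < CONFIDENCE_THRESHOLD:
        return Decision.ESCALATE, f"confidence {action.confidence:.2f} below threshold"
    return Decision.APPROVE, "ok"


# A misspelled (possibly hallucinated) package name is blocked even at high confidence.
decision, reason = review(ProposedAction("add HTTP retry", 0.99, ["reqeusts"]))
```

Checking dependencies before confidence means a confidently wrong agent still cannot introduce a package nobody has vetted.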

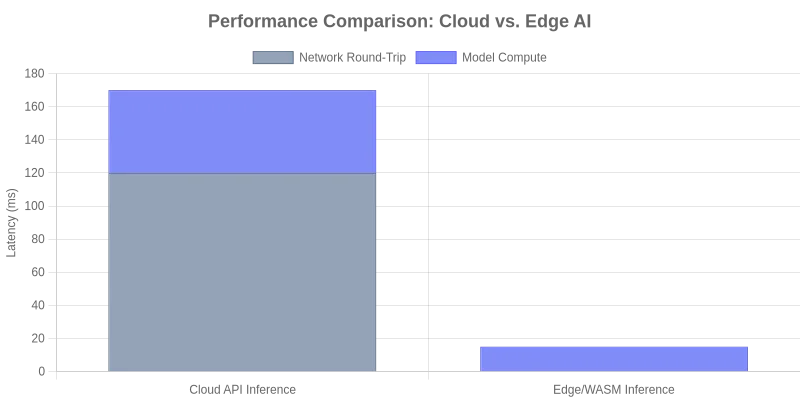

Edge AI & Privacy-First Inference

Running models directly in the browser using WebAssembly (WASM) and ONNX Runtime.

- Cost Efficiency: Offloading inference costs from expensive cloud GPUs to client devices.

- Data Privacy: Ensuring sensitive inputs never leave the user’s device.

Value Proposition

Many AI projects fail at the integration phase or succumb to unforeseen safety risks. My methodology treats AI models as high-risk components of a distributed system: versioned, monitored, and integrated into standard CI/CD pipelines.

Key Deliverables

- Agent Defense Frameworks: Guardrails against multi-turn adversarial attacks (Crescendo).

- RAG Pipelines: Retrieval-Augmented Generation for grounding LLMs in your verified data.

- Cost-Optimized Inference: Hybrid edge/cloud architectures to reduce token spend.

- Evaluation Suites: Automated testing for hallucinations and drift using 2026-standard metrics.
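
To illustrate why multi-turn guardrails differ from per-message filters, here is a rough sketch of a guard that accumulates risk across a conversation. The class `ConversationGuard`, both limits, and the keyword-based `toy_scorer` are hypothetical placeholders; a real system would substitute a trained classifier and calibrated thresholds.

```python
from typing import Callable


class ConversationGuard:
    """Tracks risk across a whole conversation, not just the latest message."""

    def __init__(
        self,
        score_turn: Callable[[str], float],
        turn_limit: float = 0.8,
        cumulative_limit: float = 1.5,
    ) -> None:
        self.score_turn = score_turn              # returns risk in [0, 1] for one message
        self.turn_limit = turn_limit              # hypothetical per-message cutoff
        self.cumulative_limit = cumulative_limit  # hypothetical whole-conversation cutoff
        self.total = 0.0

    def allow(self, message: str) -> bool:
        """Reject a message if it, or the conversation so far, exceeds a limit."""
        risk = self.score_turn(message)
        self.total += risk
        return risk < self.turn_limit and self.total < self.cumulative_limit


# Toy scorer for illustration only; a real one would be a trained classifier.
def toy_scorer(message: str) -> float:
    return 0.6 if "bypass" in message.lower() else 0.1


guard = ConversationGuard(toy_scorer)
decisions = [
    guard.allow(message)
    for message in [
        "Tell me about the content filter.",
        "How might someone bypass it?",
        "Now bypass it for a specific case.",
        "And bypass the audit log too.",
    ]
]
```

In this toy run no single message crosses the per-message limit, yet the fourth is rejected because the running total does, which is the gradual-escalation pattern a per-message filter would miss.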